Arctop and the Intelligence Layer for Brain Data: Dan Furman on Decoding the Mind

Arctop CEO Dan Furman on why the future of neurotechnology may depend less on new hardware and more on the software that can finally make brain signals useful.

Dan Furman is CEO of Arctop.

When people think about brain-computer interfaces, they often think first about hardware: implants, headsets, sensors, and futuristic devices. Dan Furman thinks the deeper opportunity sits elsewhere.

As Co-Founder and CEO of Arctop, Furman has spent years focused on the intelligence layer between brain-sensing hardware and the applications built on top of it. His view is that the core bottleneck in neurotechnology is not simply capturing signals, but decoding them well enough, fast enough, and personally enough to make them useful in the real world.

In this conversation, he reflects on the operating-room moment that first pulled him into neuroscience, why Arctop made an explicit bet on software rather than hardware, what the company believes it can see in brain data that others still miss, and how he thinks cognition-aware computing could reshape health, education, entertainment, and human-computer interaction more broadly.

“The human brain is constantly talking. Arctop built an ear for it.”

What originally drew you into the brain, and how did that eventually lead to founding Arctop?

It started in a surgical theatre in Newport Beach, California, when I was 16. I was a research intern under neurosurgeon Dr. Christopher Duma, watching him perform an open-brain procedure on a Parkinson’s patient. Over Dr. Duma’s shoulder, I saw the patient’s brain shimmering under the operating-room lights as the patient was gently awakened from anesthesia and spoke with the surgical team while they located the right place to implant a stimulating electrode.

The brain itself has no pain receptors, so the patient was speaking comfortably while Dr. Duma adjusted the electrode’s electrical output. As he turned the stimulation up and down, the patient’s Parkinsonian tremors got worse, then better, then completely disappeared. That was the moment I understood, viscerally, that the brain is electric. That thought never left me.

I went on to study neurobiology at Harvard. While I was there, the first iPhone came out, and I had this feeling that the intimacy between biological and computational electrical signals was going to define the coming decades. I moved away from the pre-med track, which I had started with the intention of following in Dr. Duma’s footsteps, and deeper into advanced technology.

After graduating in 2011, I joined a small medtech startup in San Diego working in sleep technology. Within months, we were adapting the company’s at-home sleep brain-monitoring hardware to serve as a communication device for Stephen Hawking, whose ALS had progressed to the point where even his cheek-click controller was failing him. He did not want to be implanted with anything, so that ruled out the most capable neural-interface systems at the time, and we were chosen as the outside team to try to give him a non-invasive way to continue communicating.

We made meaningful progress, but the technology ultimately never reached the people who most needed it. That gap — between what science could do and what anyone could actually access — became the defining frustration of my early career.

So I went back into research. I did my PhD at the Technion’s Evoked Potentials Laboratory in Israel and, with Professor Hillel Pratt and collaborators, we achieved something that had not been done before: We used EEG data to decode imagined finger movements accurately, not perfectly, but far beyond chance. That level of granularity had been considered essentially impossible because of how noisy EEG signals are. What it proved to me was that scalp-measured brain signals carry far more information than the field had assumed, and that the real scaling challenge was not the brain’s inaccessibility but the unsolved problem of accurate brain-decoding software. That realization led directly to founding Arctop in the spring of 2016.

For those coming across Arctop for the first time, how do you describe what the company is building today?

At the highest level, Arctop is building the software layer that can listen to the brain in real time and translate those signals into something useful.

The simplest true thing in neuroscience is that every thought you have, every moment of focus, boredom, stress, or excitement, produces tiny electrical signals. The brain is constantly talking. For decades, scientists could record that signal in hospitals and labs with expensive hardware, but it was slow, specialist-driven, and mostly unusable for everyday life.

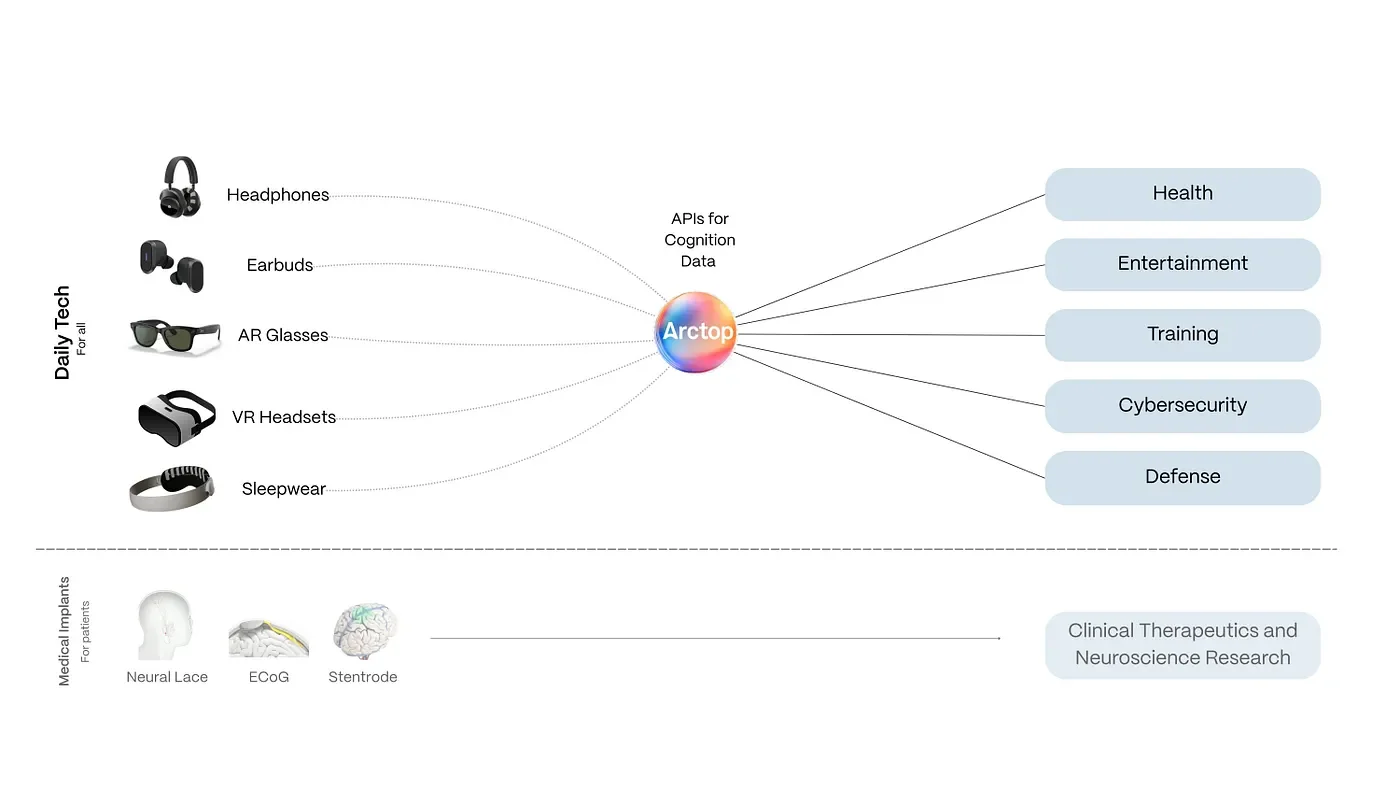

At Arctop, we asked a different question: What if software could listen to that signal in real time through low-cost sensors embedded in headphones, earbuds, glasses, or other head-worn devices, and actually understand what it means? That is the whole company. Our software sits between a wearable device and an app, and translates brain signals into something useful: Is this person focused, bored, stressed, learning, or confused?

I often use this analogy: A thermometer does not understand fever, it just reads temperature. A doctor understands what that temperature means. Arctop is trying to be the doctor for brain electricity, but running in software, in real time.

Arctop aims to leverage multi-source neural data for a range of applications.

That opens up a wide range of applications. A video game could get harder when you are too relaxed and easier when you are overwhelmed. A pilot-training system could adapt what it teaches based on how your brain is actually absorbing information. A music app could shift based on your real mental state rather than what you last clicked.
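The video-game example amounts to a simple feedback loop. As a purely illustrative sketch, and not Arctop's actual API or method, assume a hypothetical `load` score between 0 (very relaxed) and 1 (overwhelmed) arrives from some decoding layer. The function name, thresholds, and level range below are all assumptions chosen for the example.

```python
def adjust_difficulty(level: int, load: float,
                      low: float = 0.3, high: float = 0.7,
                      min_level: int = 1, max_level: int = 10) -> int:
    """Return the next difficulty level given a hypothetical load score.

    `load` is an assumed 0..1 estimate of how taxed the player is;
    the thresholds `low` and `high` are illustrative, not calibrated.
    """
    if not 0.0 <= load <= 1.0:
        raise ValueError("load must be between 0 and 1")
    if load < low:        # too relaxed: make the game harder
        level += 1
    elif load > high:     # overwhelmed: make the game easier
        level -= 1
    # Keep the result inside the allowed range of levels.
    return max(min_level, min(max_level, level))


# A relaxed player at level 5 moves up; an overwhelmed one moves down.
next_up = adjust_difficulty(5, load=0.1)
next_down = adjust_difficulty(5, load=0.9)
```

A real system would presumably smooth the score over time before acting on it, so that one noisy reading does not change the game.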

We license that software to hardware companies and developers building applications across skill training, assistive communication, and adaptive experiences. We do not make the hardware or the end app. We are the intelligence layer in between, designed to work across different devices and applications.

In one sentence, Arctop is trying to be to brain-reading wearables what iOS was to the iPhone: The software layer that makes the hardware actually mean something.

What we believe we have solved better than most others comes down to the math. We have worked through some of the hardest technical problems in non-invasive sensing, and that allows us to operate at a higher bandwidth of decoding, meaning our software can pull more useful information out of noisy brain data. We have not scaled brain-enhancing technology to millions of people yet — no one has — but what we bring today is an API layer for real-time cognition measurement that helps applications better understand and respond to users. By building around off-the-shelf, non-medical hardware and consumer applications, we also avoid many of the limitations that will keep implant-based systems from reaching very large populations in the near term.

“If we can reliably decode what’s happening in the brain from non-invasive signals, the entire ecosystem benefits.”

Arctop has been very explicit about being a software company. In a field crowded with hardware bets, why was that the right call?

The early realization for us was that hardware in this space is fundamentally a distribution problem, and one that many others will solve well. The world is filling up with brain-sensing devices. Competing there did not feel like the right battle.

What all of those devices have in common is that they produce signal data, but most of them do not really understand it. The primary bottleneck is making sense of the data. So we made a deliberate decision to work on the intelligence layer.

If we can reliably decode what is happening in the brain from non-invasive signals, the whole ecosystem benefits. Devices become more valuable. Applications become more capable. End users get more variety and better experiences. That is where we think the real leverage is.

It is similar to how the smartphone ecosystem evolved. A lot of the enduring value accrued to the platforms and software layers that made the hardware useful, not just to the hardware itself. Our goal is to be that connective layer for real-time cognition so developers and companies can build experiences that respond to the user’s brain, and can do so in a trusted way.

There is also a broader philosophical reason behind our choice. If this class of technology is going to matter at scale, it has to be accessible. It has to reach millions of people, not remain confined to hospitals, surgeons, and very expensive systems. By building software that runs on devices people already have, or will have soon, we think we are taking the most direct path toward that future.

What does Arctop see in brain data that others are still missing?

The most important thing our system sees in the data is the individual. A lot of the field has focused on trying to find universal signals, a brain pattern that means focus, stress, or some other state across everyone. Those signals do exist to some extent, but on their own they tend to be relatively weak and unreliable. What really matters is how the signals self-organize within a specific person.

Each brain has its own patterns, almost its own grammar. Our software establishes a cognitive baseline, what we call ‘Brain ID’, and then uses that to interpret the rest of the signal with much more precision. In that sense, we are not just looking at noise or averages, we are modeling the individual and the way that person’s brain conducts and organizes information.

The other thing our software sees differently is time. We are obsessed with timing relationships across signals and data sets. We measure continuously, millisecond by millisecond, which means we can capture the full arc of an experience as it unfolds. That temporal layer changes everything. It brings a kind of granularity at the speed of the nervous system that surveys or after-the-fact reporting simply cannot match.

When you combine individuality and time, weak and fragmented signals start to become clearer, more actionable, and much more useful outside the lab.
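To make the idea of a per-person baseline concrete, here is a minimal sketch that scores a reading relative to that individual's own calibration data rather than a population average. It uses a plain z-score, a generic stand-in and not Arctop's ‘Brain ID’ method, which the interview does not detail. The function names and the numbers are hypothetical.

```python
from statistics import mean, stdev


def fit_baseline(calibration: list[float]) -> tuple[float, float]:
    """Summarize one person's calibration readings as (mean, std dev)."""
    if len(calibration) < 2:
        raise ValueError("need at least two calibration readings")
    return mean(calibration), stdev(calibration)


def relative_to_baseline(value: float, baseline: tuple[float, float]) -> float:
    """Express a new reading in standard deviations from the person's baseline."""
    mu, sigma = baseline
    if sigma == 0:
        raise ValueError("calibration readings have no variation")
    return (value - mu) / sigma


# Hypothetical feature values for two people (arbitrary units).
person_a = fit_baseline([8.0, 9.0, 10.0, 11.0, 12.0])
person_b = fit_baseline([12.0, 13.0, 14.0, 15.0, 16.0])

# The same raw reading is above baseline for A and below baseline for B.
score_a = relative_to_baseline(12.0, person_a)
score_b = relative_to_baseline(12.0, person_b)
```

The point of the sketch is only that an identical raw value can mean opposite things for two people, which is why a universal threshold tends to be unreliable.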

The Arctop team.

Where do you think the real breakthroughs in the field have come from in recent years, and what still feels underrated?

It is genuinely the convergence of better sensors, stronger AI, and smarter data pipelines. None of them matters very much in isolation.

You need enough signal quality before AI can do anything useful. Otherwise it is just garbage in, garbage out. Then, once the signal quality is good enough, and good enough in natural environments rather than just in a lab, you need models sophisticated enough to extract structure from it. And beyond that, you need infrastructure that can process and contextualize the data in real time and deliver it reliably to third-party applications that can respond to the user in the moment.

If any one of those pieces is missing, you are left with something that works in a controlled setting but does not translate into the real world.

What has changed most in recent years is the convergence of large-scale neural datasets with modern AI architectures. The models we can train today are fundamentally different. We are able to extract structure from signals that were previously dismissed as too noisy.

What still feels underrated is the personalization problem. Transfer learning, calibration, fine-tuning to the individual, that is where many systems quietly fail. You can have great hardware and strong models, but if you cannot adapt to the person, it does not hold up in real-world use. It is not the flashiest part of the work, but it is often what determines whether the system is actually useful.

“A lot of the field has focused on trying to find universal signals. The most important thing our system sees in the data is the individual.”

Finally, where do you think neurotechnology is heading, and what does the world look like if Arctop succeeds?

I cannot say I know exactly what the world will look like, but I do think we are at the beginning of a transition as significant as the one that followed the smartphone.

The smartphone made computing personal, not just professional, and it made information constantly present. The next wave, whether you call it neurotech, brain-computer interfaces, or something else, makes computing aware. It creates a world in which the systems around you can understand who you are in a given moment, what you are feeling, and how you are responding, without you having to constantly click, type, or explicitly explain yourself.

That creates a fundamentally different relationship between people and the systems they use. The closed loop starts to function more like an extension of the nervous system, another layer sitting on top of the physical brain and interacting with the digital world in real time.

In the near term, that shows up in some very practical areas. In health, real-time tracking of cognitive and emotional states opens up new approaches to diagnosis and treatment. In education, static systems become dynamic ones that adapt continuously to how someone is actually learning. In entertainment, music, film, and games become more responsive to the experience of the person in the moment.

None of that works unless there is an underlying layer that makes brain data usable at scale, and that is what we are building at Arctop.

The long-term mission is to create a state-of-the-art intelligence layer that connects head-worn devices and applications in a way that helps us better understand and care for the brain itself. If we get that right, the larger goal is simple: Reduce the amount of suffering tied to the brain, and make something that is often fragile and poorly understood into something we can support and improve over the course of a lifetime.

Also published on Medium via NeuroTechX
